philosophy

On the use of LLM (“AI”) in security decisions

- Albert Zenkoff

- 2026-03-29

- Technology, Artificial Intelligence, LLM, long-term view, perspective, philosophy, security, technology

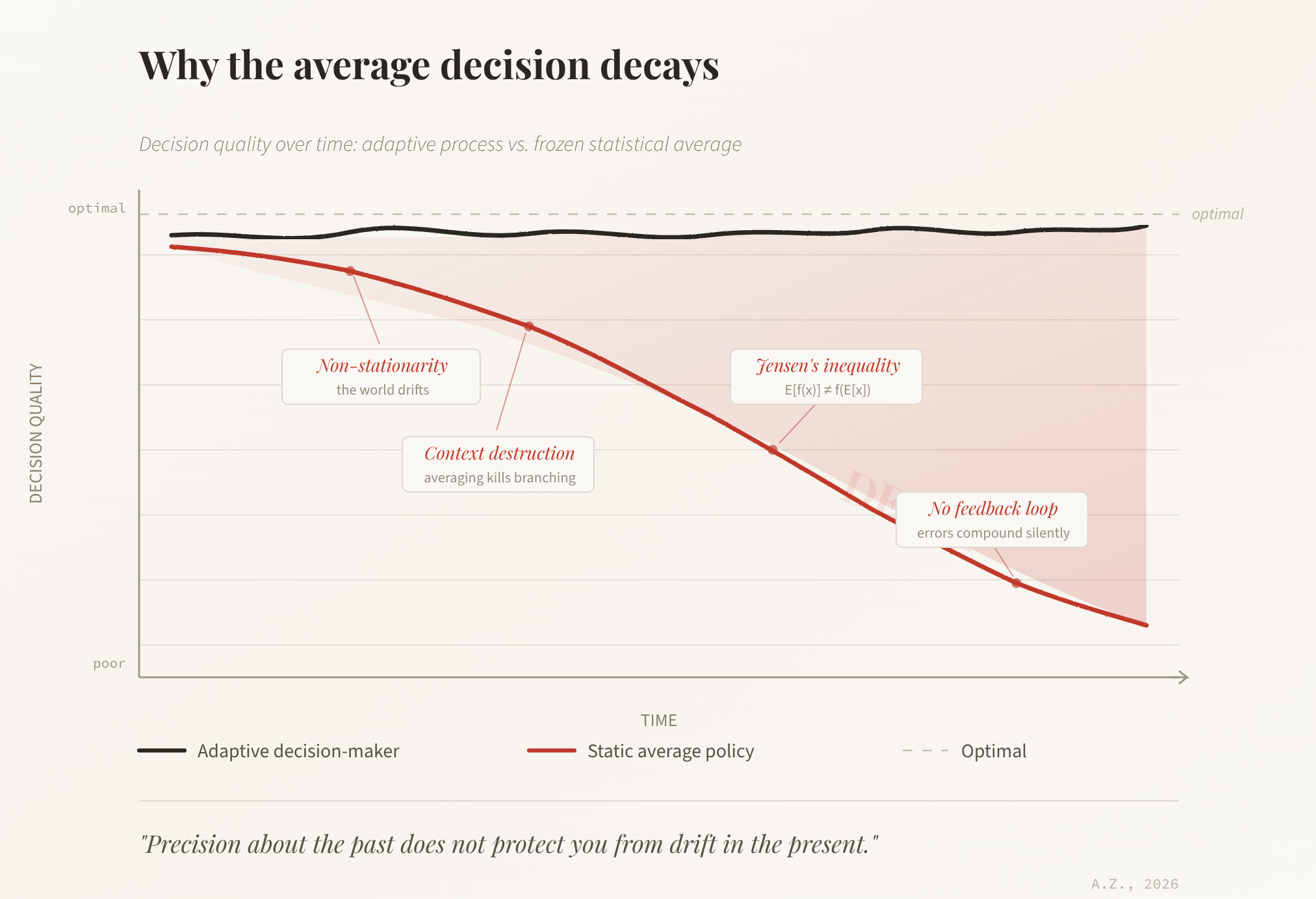

What will happen if we keep using Large Language Models as the basis of decisions, especially in a field like security? Would taking the statistically average decision from this moment on, at every step, lead to a decay of decision correctness over time, or would it stay stable? The question sits at the intersection of ...

Worst languages for software security

- Albert Zenkoff

- 2016-01-19

- Technology, C/C++, Development, Java, languages, misleading, OWASP, philosophy, problem, quality, security promise, vulnerability, web application

I was sent an article about the programming languages that generate the most security bugs in software today. The article seemed to refer to a report by Veracode, a company I know well, to discuss what software security problems are out there in applications written in different languages. That is an excellent question and a very interesting ...

The human factor: philosophy and engineering

- Albert Zenkoff

- 2015-04-22

- Management, arete, blind men and an elephant, engineering, excellence, holistic approach, long-term view, philosophy, quality, security, systemic approach, value, virtue

The ancient Greeks had a concept of “aretê” (/ˈærətiː/) that is usually loosely translated to English as “quality”, “excellence”, or “virtue”. It was all that and more: the term meant the ultimate and harmonious fulfillment of task, purpose, function, or even the whole life. Living up to this concept was the highest achievement one could ...

Security Forum Hagenberg 2015

- Albert Zenkoff

- 2015-03-29

- Society, announcement, Austria, Hagenberg im Mühlkreis, human factor, philosophy, presentation, public, security, Security Forum, talk

I will be talking about philosophy in engineering, or the human factor in the development of secure software, at the Security Forum in Hagenberg im Mühlkreis, Austria, on the 22nd of April. https://www.securityforum.at/en/ My talk will concentrate on the absence of a holistic, systemic approach in current software development as a result of taking ...

Google bots subversion

- Albert Zenkoff

- 2013-11-09

- Technology, application security, attack, bots, Development, firewall, Google, philosophy, secure by design, software, software design, software security, subversion, technology, unexpected

There is a lot of truth in saying that every tool can be used for good and for evil. There is no point in blocking the tools themselves, as the attacker will turn to new tools and subvert the very familiar tools in unexpected ways. Now Google crawler bots have been turned into such a weapon ...

Security Assurance vs. Quality Assurance

- Albert Zenkoff

- 2013-10-02

- Management, Development, philosophy, process, Quality Assurance, Security Assurance, software design, software security

It is often debated how quality assurance relates to security assurance. I have a slightly unconventional view of the relation between the two. You see, when we talk about security assurance in software, I view the whole process in my head end to end. And the process runs roughly like this: The designer has ...

User Data Manifesto

- Albert Zenkoff

- 2013-09-20

- Society, cloud, control access, general, initiative, manifesto, own data, philosophy, privacy, private data, storage, transparency

The confirmation that governments spy on people on the Internet and have access to private data that they should not have has sparked some interesting initiatives. One of them is the User Data Manifesto: 1. Own the data. The data that someone directly or indirectly creates belongs to the person who created it. ...

Apple – is it any different?

- Albert Zenkoff

- 2013-03-30

- Society, costs, economics, externalities, general, philosophy, security investment, security strategy, short-term gain, usability

The article “Password denied: when will Apple get serious about security?” in The Verge talks about Apple’s insecurity and blames Apple’s badly organized security and the absence of any visible security strategy and effort. Moreover, it seems that Apple is not even taking security sufficiently seriously. “The reality is that the Apple way values usability over ...

Security training – does it help?

- Albert Zenkoff

- 2013-03-28

- Management, awareness, draconian security, general, management, philosophy, rare risk ignorance, risk, risk awareness, security education, security training, training

I came across the suggestion to train (nearly) everyone in the organization in security subjects. The idea is very good: we often have the problem that management has absolutely no knowledge of or interest in security and therefore ignores the subject despite the efforts of the security experts in the company. Developers, quality, documentation, product ...

Brainwashing in security

- Albert Zenkoff

- 2013-02-28

- Society, corporate, general, greedy, irresponsible, Lies, philosophy, RSA Conference, security, social

At first, when I read the article titled “Software Security Programs May Not Be Worth the Investment for Many Companies”, I thought it was a joke or a prank. But then I had a feeling it was not. And it was not the 1st of April. And it seems to be a record of events ...
