technology

On the use of LLM (“AI”) in security decisions

- Albert Zenkoff

- 2026-03-29

- Technology, Artificial Intelligence, LLM, long-term view, perspective, philosophy, security, technology

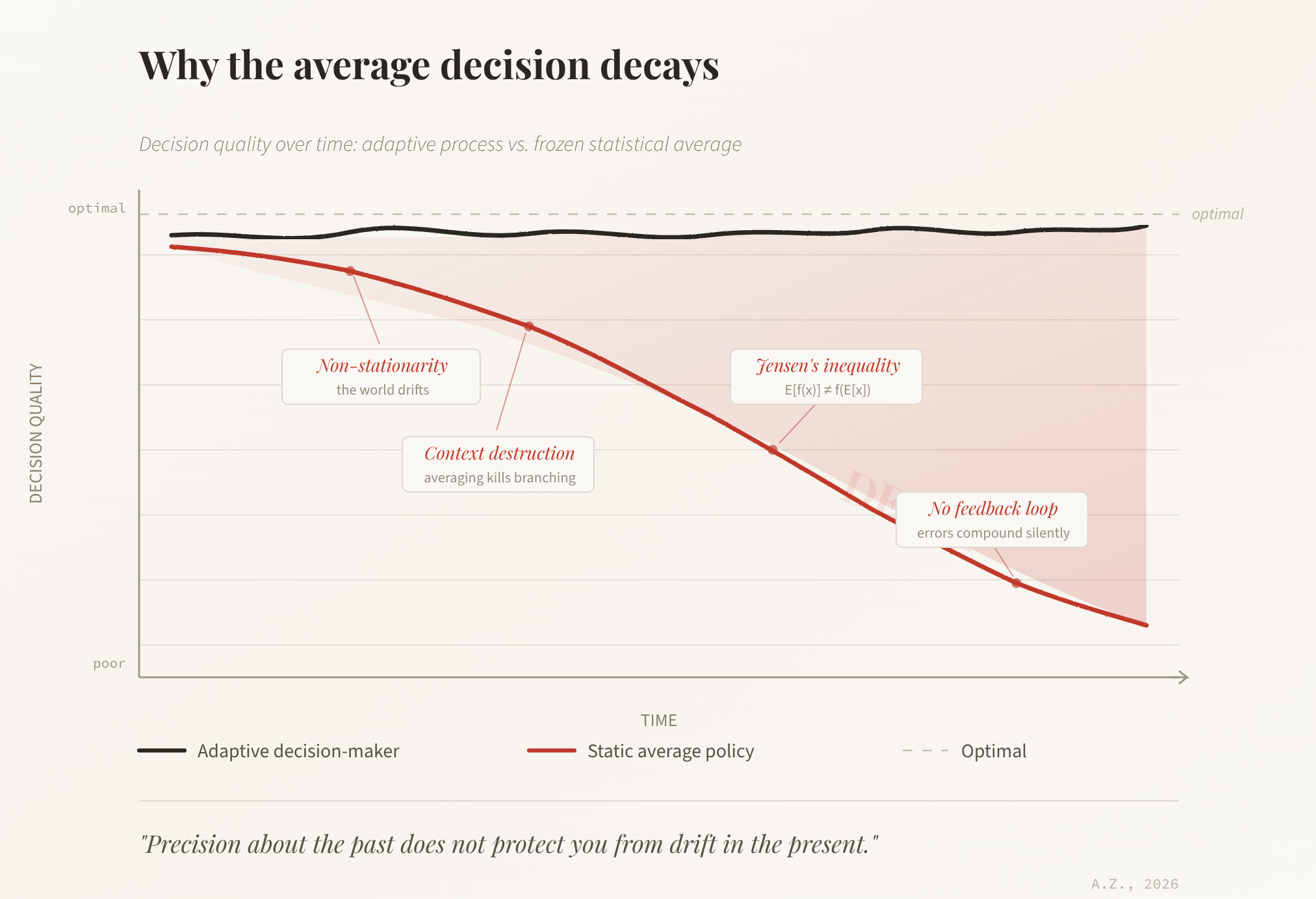

What will happen if we keep using Large Language Models as the basis of decisions, especially in a field like security? Would taking the statistically average decision from this moment on, at every step, lead to a decay of decision correctness over time, or would it stay stable? The question sits at the intersection of ...

AI Software Design Security and Language Choice

- Albert Zenkoff

- 2025-11-30

- Technology, Development, technology

Or, rather, the quality of the Artificial Intelligence (AI) generated software in general but it goes for security as well, naturally, since security and quality are but the two sides of the same coin. Software engineers happily embraced the wonderful possibility of being able to program in a natural language – describing the task to ...

Over-engineering

- Albert Zenkoff

- 2014-04-13

- Technology, bugs, code size, coding techniques, complexity, keep it simple, kiss, maintainability, management, over-engineering, overengineering, show off, technology

Causes for security problems are legion. One of the most pertinent problems in software development is “over-engineering”: the creation of an over-complicated design or over-complicated code not justified by the complexity of the task at hand. Often it comes as a result of the designer’s desire to show off, to demonstrate the knowledge of all ...

Camera and microphone attack on smartphones

- Albert Zenkoff

- 2013-11-13

- Technology, attack, attack path, camera, microphone, mobile, PIN, security, side-channel, smart phone, technology

Researchers at the University of Cambridge have published a paper titled “PIN Skimmer: Inferring PINs Through The Camera and Microphone” describing a new approach to recovering PIN codes entered on a mobile on-screen keyboard. We had seen applications use the accelerometer and gyroscope before to infer the buttons pressed. This time, they use the ...

Google bots subversion

- Albert Zenkoff

- 2013-11-09

- Technology, application security, attack, bots, Development, firewall, Google, philosophy, secure by design, software, software design, software security, subversion, technology, unexpected

There is a lot of truth in saying that every tool can be used by good and by evil. There is no point in blocking the tools themselves as the attacker will turn to new tools and subvert the very familiar tools in unexpected ways. Now Google crawler bots were turned into such a weapon ...

Cloud security

- Albert Zenkoff

- 2013-04-27

- Technology, cloud, cloud security, data center, database, file server, mainframe, security, security models, security types, technology, virtual, VM, web server

Let’s talk a little about a subject that is very popular nowadays: the so-called ‘cloud security’. Let’s determine what it is, what we are in fact talking about, and see what may be special about it. ‘Cloud’ – what is it? Basically, the mainframes have been doing ‘cloud’ all along, for decades now. Cloud is simply ...

Exodus from Java

- Albert Zenkoff

- 2013-04-09

- Technology, coding techniques, Development, false promise, general, inherent security, Java, languages, programming, secure software, sloppy, technology

Finally, the news that I was subconsciously waiting for: the exodus of companies from Java has started. It does not come as a surprise at all. Java has never fulfilled the promises it made at the beginning. It did not deliver the promised portability, security, or ease of programming. I am only surprised it ...

Common passwords blacklist

- Albert Zenkoff

- 2013-01-09

- Technology, blacklist, brute force attack, general, Password, password database, password verification, passwords, rules, technology

Any system that implements password authentication must check that passwords are not too common. Every system faces brute-force attacks that try one or another list of the most common passwords (and usually succeed, by the way). The system must have a capability to slow down an attacker by any means available: slowing down system ...
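A minimal sketch of the check described above, in Python. The `COMMON_PASSWORDS` sample and the function names are hypothetical and chosen only for illustration; a real system would load a much larger list and combine this check with other measures, such as slowing down repeated attempts.

```python
# Hypothetical sample list; a real deployment would load a far larger
# blacklist from a maintained file or database.
COMMON_PASSWORDS = frozenset({"123456", "password", "qwerty", "letmein", "111111"})


def normalize(password: str) -> str:
    """Fold case and trim whitespace so trivial variants match the list."""
    return password.strip().casefold()


def is_too_common(password: str, blacklist: frozenset = COMMON_PASSWORDS) -> bool:
    """Return True if the candidate password appears in the blacklist."""
    return normalize(password) in blacklist


if __name__ == "__main__":
    for candidate in ("Password", "correct horse battery staple"):
        verdict = "rejected" if is_too_common(candidate) else "accepted"
        print(f"{candidate!r}: {verdict}")
```

The check runs when a password is set or changed, so a rejected candidate never reaches the password database.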

Cryptography: just do not!

- Albert Zenkoff

- 2012-10-16

- Technology, Algorithm, Cryptography, Development, don't, encryption, hashing, homegrown, philosophy, software, technology

Software developers regularly attempt to create new encryption and hashing algorithms, usually to speed up things. There is only one answer one can give in this respect: Here is a short summary of reasons why you should never meddle in cryptography. Cryptography is mathematics, very advanced mathematics There are only a few good cryptographers and ...

Random or not? That is the question!

- Albert Zenkoff

- 2012-09-13

- Technology, layered security, PRNG, Pseudorandom Numbers, random, random number generator, RNG, security, separation by function, technology

Oftentimes, the first cryptography-related question you come across while designing a system is the question of random numbers. We need some random numbers in many places when developing web applications: identifiers, tokens, passwords etc. all need to be somewhat unpredictable. The question is, how unpredictable should they be? In other words, what should be ...
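As an illustration of one common case, the Python sketch below generates a token meant to be hard to guess. It assumes the value is security-sensitive (for example a session token), which the truncated excerpt does not state; the function name `new_session_token` is hypothetical. Python's `secrets` module draws from the operating system's cryptographically secure source, whereas the general-purpose `random` module is not intended for security purposes.

```python
import secrets


def new_session_token(num_bytes: int = 32) -> str:
    """Return a URL-safe token built from num_bytes of OS-provided randomness.

    Assumption: 32 bytes (256 bits) is used here as a generous default;
    the right size depends on the threat model.
    """
    return secrets.token_urlsafe(num_bytes)


if __name__ == "__main__":
    print(new_session_token())
```

Values that only need to be unique, not unpredictable, may not need this level of randomness.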
